1. import_table介紹

2. Load Data 與 import table功能示例上期技術(shù)分享我們介紹了MySQL Load Data的4種常用的方法將文本數(shù)據(jù)導(dǎo)入到MySQL,這一期我們繼續(xù)介紹另一款更加高效的數(shù)據(jù)導(dǎo)入工具,MySQL Shell 工具集中的

import_table,該工具的全稱(chēng)是Parallel Table Import Utility,顧名思義,支持并發(fā)數(shù)據(jù)導(dǎo)入,該工具在MySQL Shell 8.0.23版本后,功能更加完善, 以下列舉該工具的核心功能

- 基本覆蓋了MySQL Data Load的所有功能,可以作為替代品使用

- 默認(rèn)支持并發(fā)導(dǎo)入(支持自定義chunk大小)

- 支持通配符匹配多個(gè)文件同時(shí)導(dǎo)入到一張表(非常適用于相同結(jié)構(gòu)數(shù)據(jù)匯總到一張表)

- 支持限速(對(duì)帶寬使用有要求的場(chǎng)景,非常合適)

- 支持對(duì)壓縮文件處理

- 支持導(dǎo)入到5.7及以上MySQL

該部分針對(duì)import table和Load Data相同的功能做命令示例演示,我們依舊以導(dǎo)入employees表的示例數(shù)據(jù)為例,演示MySQL Load Data的綜合場(chǎng)景

- 數(shù)據(jù)自定義順序?qū)?/li>

- 數(shù)據(jù)函數(shù)處理

- 自定義數(shù)據(jù)取值

|

|

## 示例數(shù)據(jù)如下 |

|

|

[root@10-186-61-162 tmp]# cat employees_01.csv |

|

|

"10001","1953-09-02","Georgi","Facello","M","1986-06-26" |

|

|

"10003","1959-12-03","Parto","Bamford","M","1986-08-28" |

|

|

"10002","1964-06-02","Bezalel","Simmel","F","1985-11-21" |

|

|

"10004","1954-05-01","Chirstian","Koblick","M","1986-12-01" |

|

|

"10005","1955-01-21","Kyoichi","Maliniak","M","1989-09-12" |

|

|

"10006","1953-04-20","Anneke","Preusig","F","1989-06-02" |

|

|

"10007","1957-05-23","Tzvetan","Zielinski","F","1989-02-10" |

|

|

"10008","1958-02-19","Saniya","Kalloufi","M","1994-09-15" |

|

|

"10009","1952-04-19","Sumant","Peac","F","1985-02-18" |

|

|

"10010","1963-06-01","Duangkaew","Piveteau","F","1989-08-24" |

|

|

|

|

|

## 示例表結(jié)構(gòu) |

|

|

10.186.61.162:3306 employees SQL > desc emp; |

|

|

+-------------+---------------+------+-----+---------+-------+ |

|

|

| Field | Type | Null | Key | Default | Extra | |

|

|

+-------------+---------------+------+-----+---------+-------+ |

|

|

| emp_no | int | NO | PRI | NULL | | |

|

|

| birth_date | date | NO | | NULL | | |

|

|

| first_name | varchar(14) | NO | | NULL | | |

|

|

| last_name | varchar(16) | NO | | NULL | | |

|

|

| full_name | varchar(64) | YES | | NULL | | -- 表新增字段,導(dǎo)出數(shù)據(jù)文件中不存在 |

|

|

| gender | enum('M','F') | NO | | NULL | | |

|

|

| hire_date | date | NO | | NULL | | |

|

|

| modify_date | datetime | YES | | NULL | | -- 表新增字段,導(dǎo)出數(shù)據(jù)文件中不存在 |

|

|

| delete_flag | varchar(1) | YES | | NULL | | -- 表新增字段,導(dǎo)出數(shù)據(jù)文件中不存在 |

|

|

+-------------+---------------+------+-----+---------+-------+ |

具體參數(shù)含義不做說(shuō)明,需要了解語(yǔ)法規(guī)則及含義可查看系列上一篇文章<MySQL Load Data的多種用法>

|

|

load data infile '/data/mysql/3306/tmp/employees_01.csv' |

|

|

into table employees.emp |

|

|

character set utf8mb4 |

|

|

fields terminated by ',' |

|

|

enclosed by '"' |

|

|

lines terminated by '\n' |

|

|

(@C1,@C2,@C3,@C4,@C5,@C6) |

|

|

set emp_no=@C1, |

|

|

birth_date=@C2, |

|

|

first_name=upper(@C3), |

|

|

last_name=lower(@C4), |

|

|

full_name=concat(first_name,' ',last_name), |

|

|

gender=@C5, |

|

|

hire_date=@C6 , |

|

|

modify_date=now(), |

|

|

delete_flag=if(hire_date<'1988-01-01','Y','N'); |

|

|

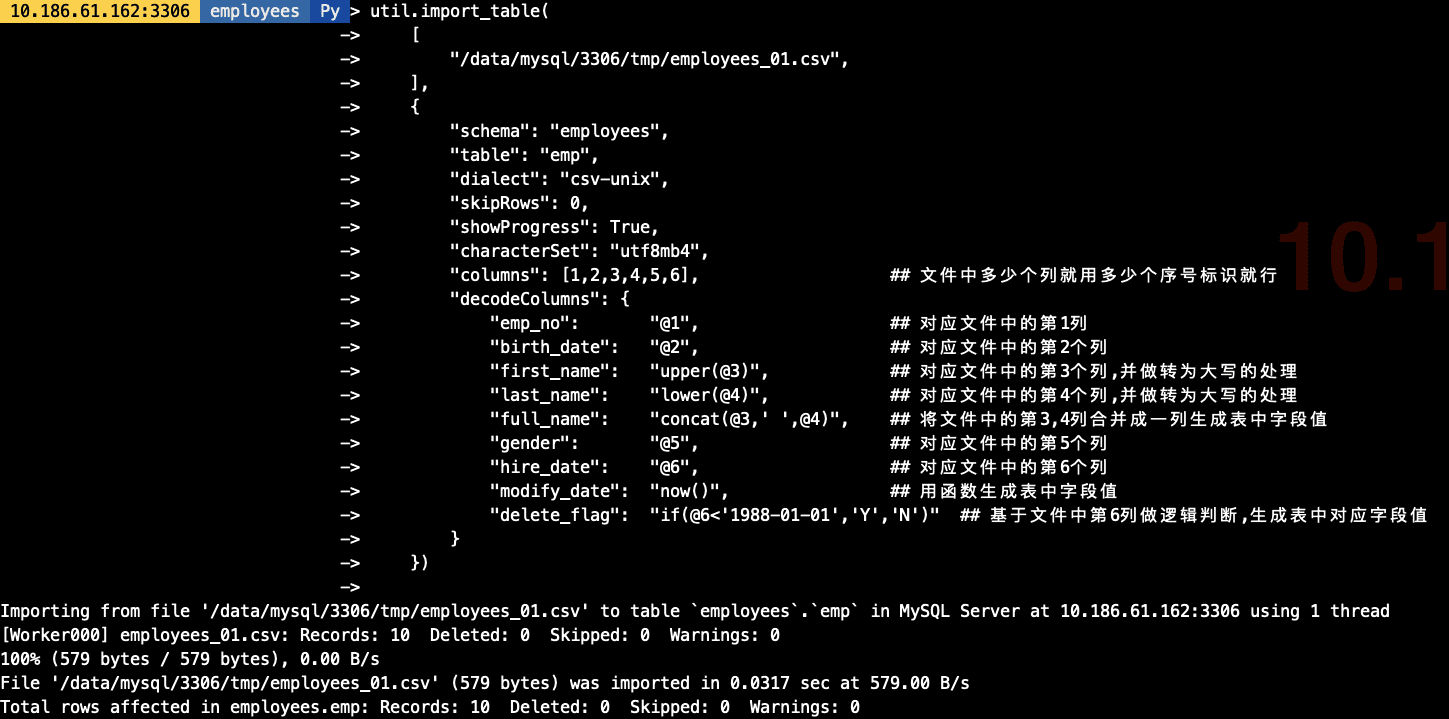

util.import_table( |

|

|

[ |

|

|

"/data/mysql/3306/tmp/employees_01.csv", |

|

|

], |

|

|

{ |

|

|

"schema": "employees", |

|

|

"table": "emp", |

|

|

"dialect": "csv-unix", |

|

|

"skipRows": 0, |

|

|

"showProgress": True, |

|

|

"characterSet": "utf8mb4", |

|

|

"columns": [1,2,3,4,5,6], ## 文件中多少個(gè)列就用多少個(gè)序號(hào)標(biāo)識(shí)就行 |

|

|

"decodeColumns": { |

|

|

"emp_no": "@1", ## 對(duì)應(yīng)文件中的第1列 |

|

|

"birth_date": "@2", ## 對(duì)應(yīng)文件中的第2個(gè)列 |

|

|

"first_name": "upper(@3)", ## 對(duì)應(yīng)文件中的第3個(gè)列,并做轉(zhuǎn)為大寫(xiě)的處理 |

|

|

"last_name": "lower(@4)", ## 對(duì)應(yīng)文件中的第4個(gè)列,并做轉(zhuǎn)為大寫(xiě)的處理 |

|

|

"full_name": "concat(@3,' ',@4)", ## 將文件中的第3,4列合并成一列生成表中字段值 |

|

|

"gender": "@5", ## 對(duì)應(yīng)文件中的第5個(gè)列 |

|

|

"hire_date": "@6", ## 對(duì)應(yīng)文件中的第6個(gè)列 |

|

|

"modify_date": "now()", ## 用函數(shù)生成表中字段值 |

|

|

"delete_flag": "if(@6<'1988-01-01','Y','N')" ## 基于文件中第6列做邏輯判斷,生成表中對(duì)應(yīng)字段值 |

|

|

} |

|

|

}) |

|

|

## 在導(dǎo)入前我生成好了3分單獨(dú)的employees文件,導(dǎo)出的結(jié)構(gòu)一致 |

|

|

[root@10-186-61-162 tmp]# ls -lh |

|

|

總用量 1.9G |

|

|

-rw-r----- 1 mysql mysql 579 3月 24 19:07 employees_01.csv |

|

|

-rw-r----- 1 mysql mysql 584 3月 24 18:48 employees_02.csv |

|

|

-rw-r----- 1 mysql mysql 576 3月 24 18:48 employees_03.csv |

|

|

-rw-r----- 1 mysql mysql 1.9G 3月 26 17:15 sbtest1.csv |

|

|

|

|

|

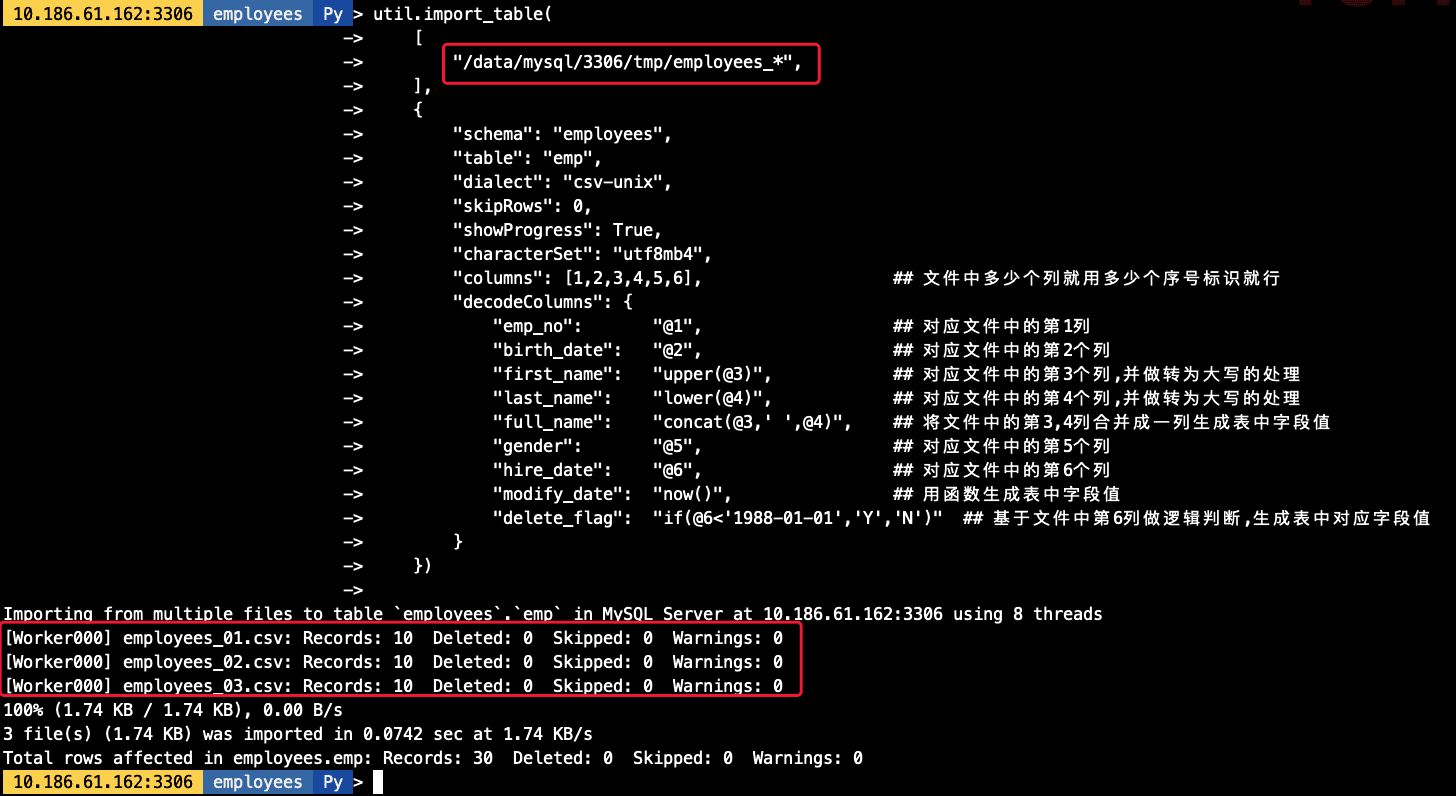

## 導(dǎo)入命令,其中對(duì)對(duì)文件用employees_*做模糊匹配 |

|

|

util.import_table( |

|

|

[ |

|

|

"/data/mysql/3306/tmp/employees_*", |

|

|

], |

|

|

{ |

|

|

"schema": "employees", |

|

|

"table": "emp", |

|

|

"dialect": "csv-unix", |

|

|

"skipRows": 0, |

|

|

"showProgress": True, |

|

|

"characterSet": "utf8mb4", |

|

|

"columns": [1,2,3,4,5,6], ## 文件中多少個(gè)列就用多少個(gè)序號(hào)標(biāo)識(shí)就行 |

|

|

"decodeColumns": { |

|

|

"emp_no": "@1", ## 對(duì)應(yīng)文件中的第1列 |

|

|

"birth_date": "@2", ## 對(duì)應(yīng)文件中的第2個(gè)列 |

|

|

"first_name": "upper(@3)", ## 對(duì)應(yīng)文件中的第3個(gè)列,并做轉(zhuǎn)為大寫(xiě)的處理 |

|

|

"last_name": "lower(@4)", ## 對(duì)應(yīng)文件中的第4個(gè)列,并做轉(zhuǎn)為大寫(xiě)的處理 |

|

|

"full_name": "concat(@3,' ',@4)", ## 將文件中的第3,4列合并成一列生成表中字段值 |

|

|

"gender": "@5", ## 對(duì)應(yīng)文件中的第5個(gè)列 |

|

|

"hire_date": "@6", ## 對(duì)應(yīng)文件中的第6個(gè)列 |

|

|

"modify_date": "now()", ## 用函數(shù)生成表中字段值 |

|

|

"delete_flag": "if(@6<'1988-01-01','Y','N')" ## 基于文件中第6列做邏輯判斷,生成表中對(duì)應(yīng)字段值 |

|

|

} |

|

|

}) |

|

|

|

|

|

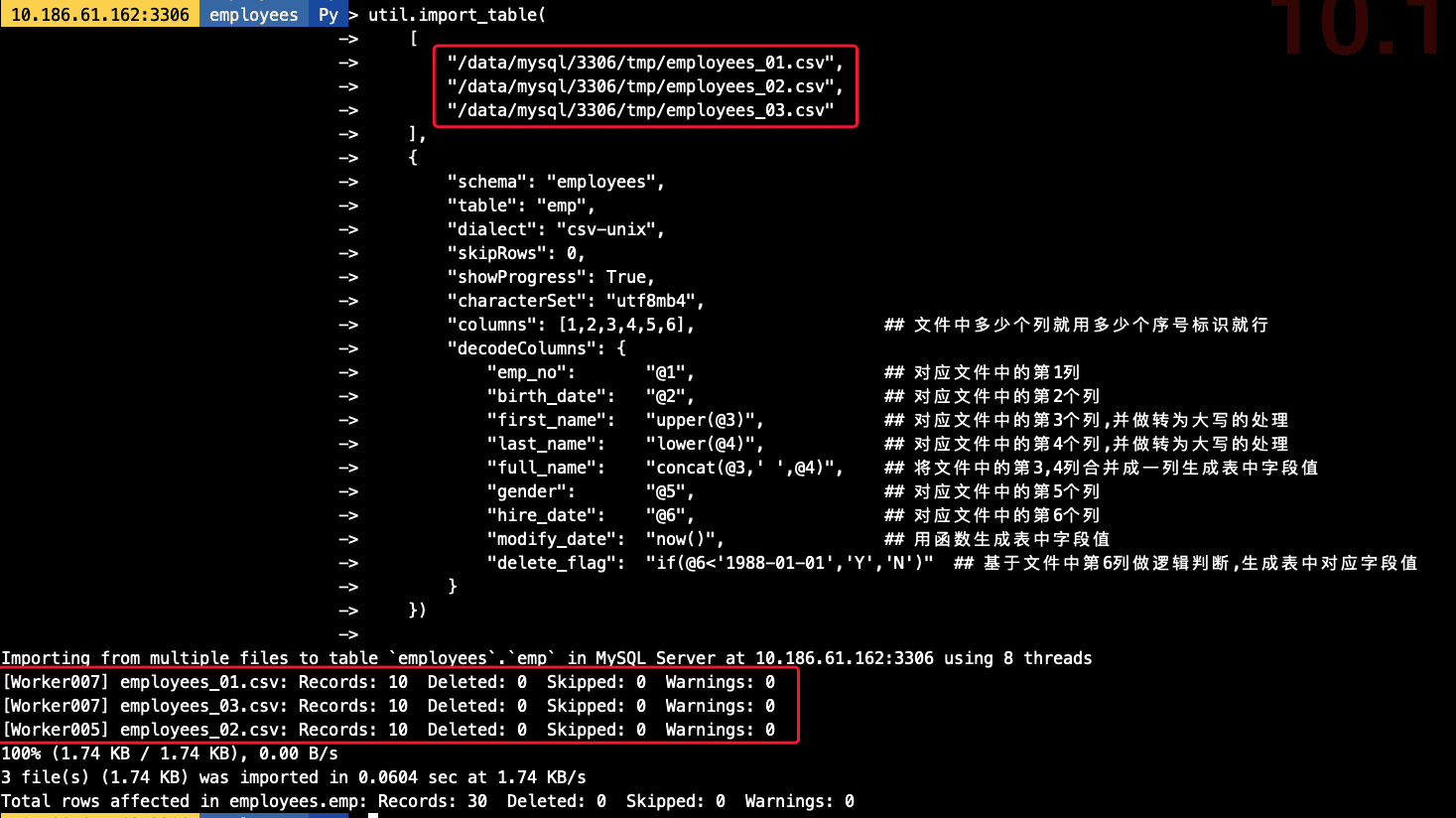

## 導(dǎo)入命令,其中對(duì)要導(dǎo)入的文件均明確指定其路徑 |

|

|

util.import_table( |

|

|

[ |

|

|

"/data/mysql/3306/tmp/employees_01.csv", |

|

|

"/data/mysql/3306/tmp/employees_02.csv", |

|

|

"/data/mysql/3306/tmp/employees_03.csv" |

|

|

], |

|

|

{ |

|

|

"schema": "employees", |

|

|

"table": "emp", |

|

|

"dialect": "csv-unix", |

|

|

"skipRows": 0, |

|

|

"showProgress": True, |

|

|

"characterSet": "utf8mb4", |

|

|

"columns": [1,2,3,4,5,6], ## 文件中多少個(gè)列就用多少個(gè)序號(hào)標(biāo)識(shí)就行 |

|

|

"decodeColumns": { |

|

|

"emp_no": "@1", ## 對(duì)應(yīng)文件中的第1列 |

|

|

"birth_date": "@2", ## 對(duì)應(yīng)文件中的第2個(gè)列 |

|

|

"first_name": "upper(@3)", ## 對(duì)應(yīng)文件中的第3個(gè)列,并做轉(zhuǎn)為大寫(xiě)的處理 |

|

|

"last_name": "lower(@4)", ## 對(duì)應(yīng)文件中的第4個(gè)列,并做轉(zhuǎn)為大寫(xiě)的處理 |

|

|

"full_name": "concat(@3,' ',@4)", ## 將文件中的第3,4列合并成一列生成表中字段值 |

|

|

"gender": "@5", ## 對(duì)應(yīng)文件中的第5個(gè)列 |

|

|

"hire_date": "@6", ## 對(duì)應(yīng)文件中的第6個(gè)列 |

|

|

"modify_date": "now()", ## 用函數(shù)生成表中字段值 |

|

|

"delete_flag": "if(@6<'1988-01-01','Y','N')" ## 基于文件中第6列做邏輯判斷,生成表中對(duì)應(yīng)字段值 |

|

|

} |

|

|

}) |

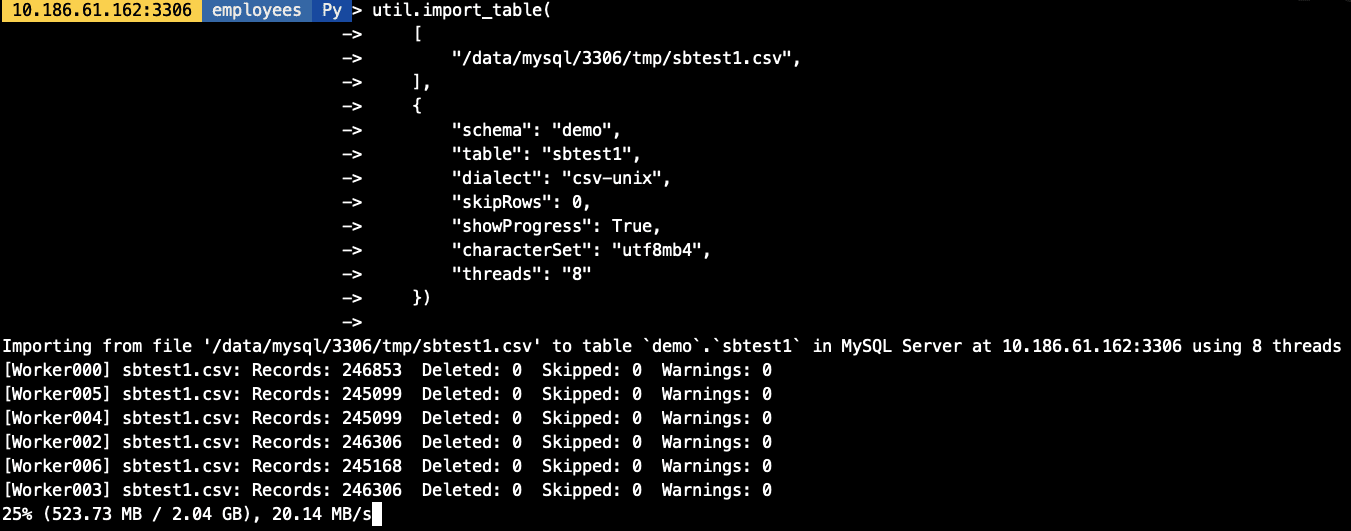

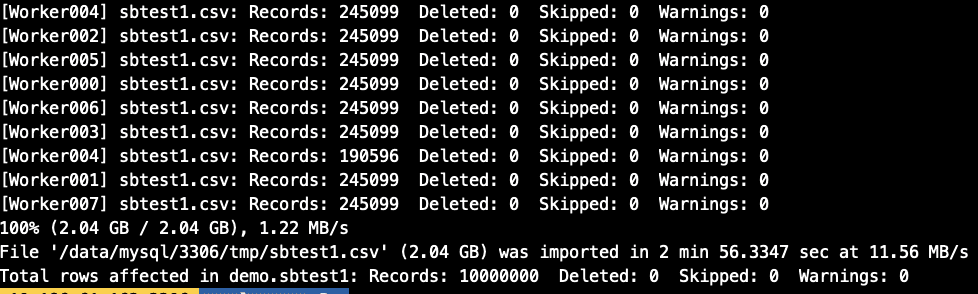

在實(shí)驗(yàn)并發(fā)導(dǎo)入前我們創(chuàng)建一張1000W的sbtest1表(大約2G數(shù)據(jù)),做并發(fā)模擬,import_table用

threads參數(shù)作為并發(fā)配置, 默認(rèn)為8個(gè)并發(fā).

|

|

|

|

|

[] |

|

|

總用量 1.9G |

|

|

-rw-r----- 1 mysql mysql 579 3月 24 19:07 employees_01.csv |

|

|

-rw-r----- 1 mysql mysql 584 3月 24 18:48 employees_02.csv |

|

|

-rw-r----- 1 mysql mysql 576 3月 24 18:48 employees_03.csv |

|

|

-rw-r----- 1 mysql mysql 1.9G 3月 26 17:15 sbtest1.csv |

|

|

|

|

|

|

|

|

util.import_table( |

|

|

[ |

|

|

, |

|

|

], |

|

|

{ |

|

|

"schema": "demo", |

|

|

"table": "sbtest1", |

|

|

"dialect": "csv-unix", |

|

|

"skipRows": 0, |

|

|

"showProgress": True, |

|

|

"characterSet": "utf8mb4", |

|

|

"threads": "8" |

|

|

}) |

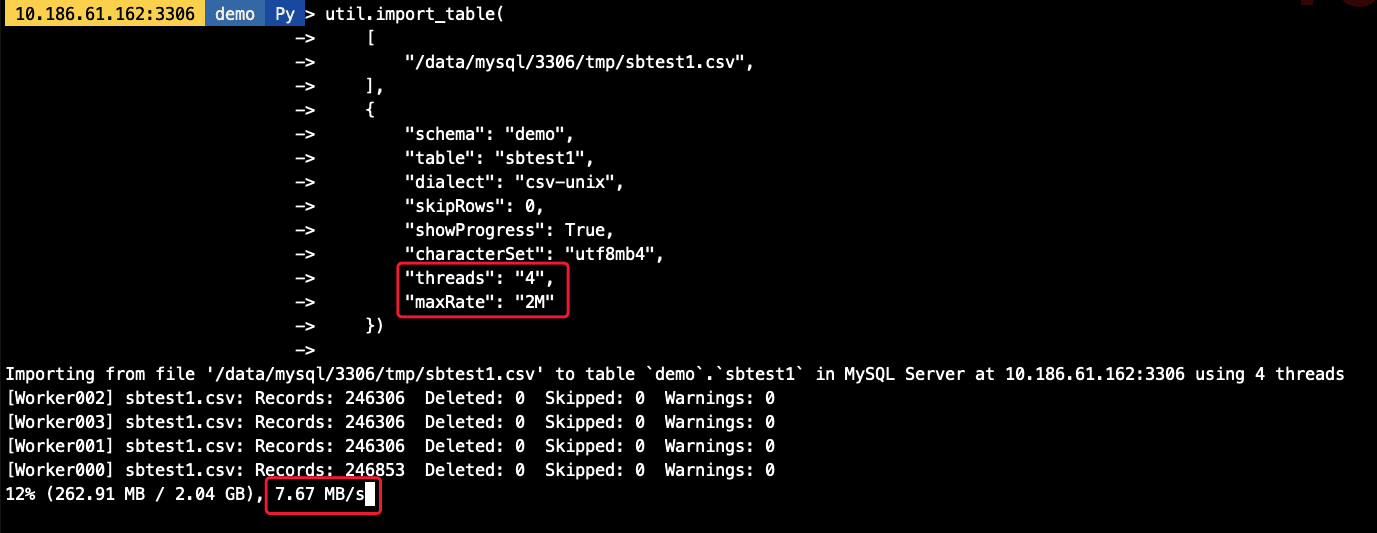

可以通過(guò)

maxRate和threads來(lái)控制每個(gè)并發(fā)線程的導(dǎo)入數(shù)據(jù),如,當(dāng)前配置線程為4個(gè),每個(gè)線程的速率為2M/s,則最高不會(huì)超過(guò)8M/s

|

|

util.import_table( |

|

|

[ |

|

|

, |

|

|

], |

|

|

{ |

|

|

"schema": "demo", |

|

|

"table": "sbtest1", |

|

|

"dialect": "csv-unix", |

|

|

"skipRows": 0, |

|

|

"showProgress": True, |

|

|

"characterSet": "utf8mb4", |

|

|

"threads": "4", |

|

|

"maxRate": "2M" |

|

|

}) |

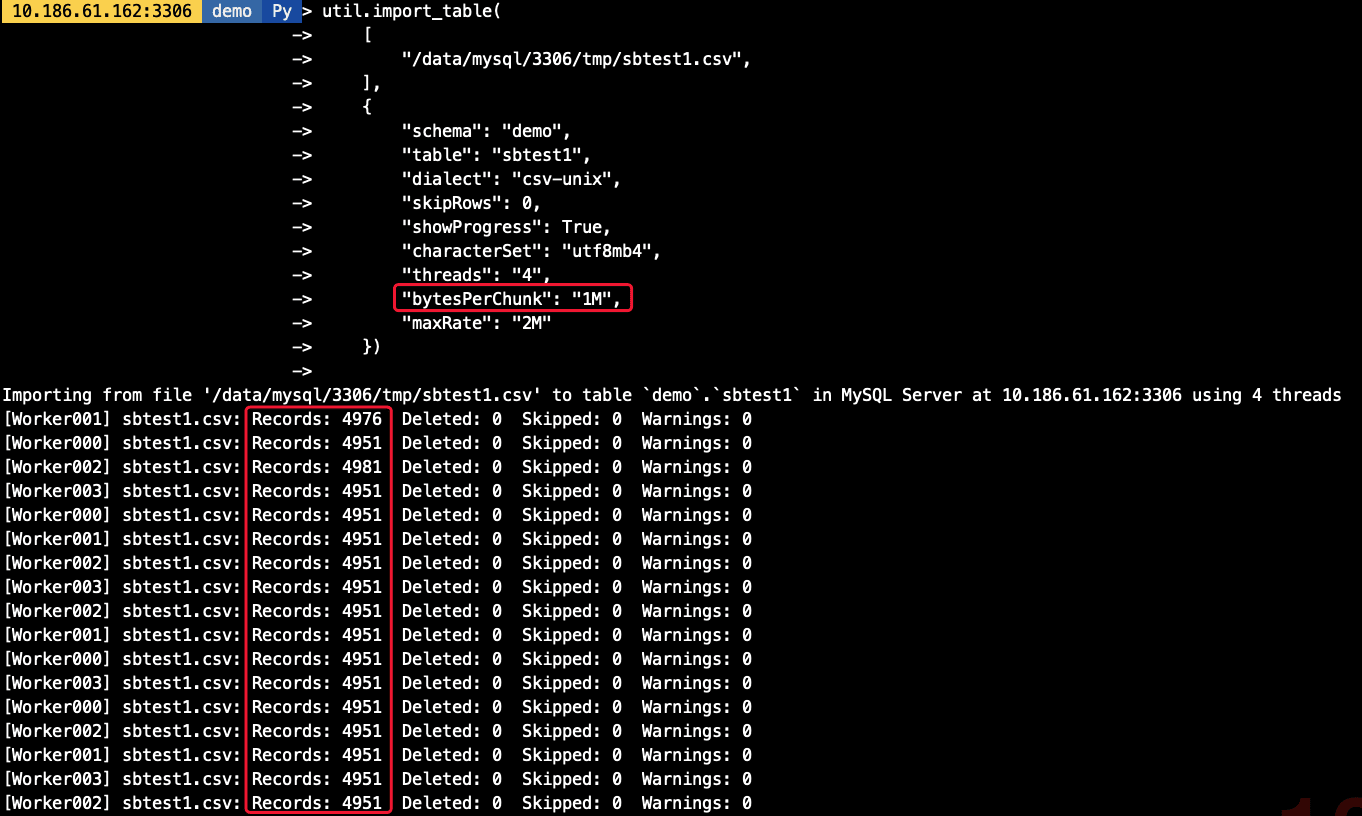

默認(rèn)的chunk大小為50M,我們可以調(diào)整chunk的大小,減少事務(wù)大小,如我們將chunk大小調(diào)整為1M,則每個(gè)線程每次導(dǎo)入的數(shù)據(jù)量也相應(yīng)減少

|

|

util.import_table( |

|

|

[ |

|

|

, |

|

|

], |

|

|

{ |

|

|

"schema": "demo", |

|

|

"table": "sbtest1", |

|

|

"dialect": "csv-unix", |

|

|

"skipRows": 0, |

|

|

"showProgress": True, |

|

|

"characterSet": "utf8mb4", |

|

|

"threads": "4", |

|

|

"bytesPerChunk": "1M", |

|

|

"maxRate": "2M" |

|

|

}) |

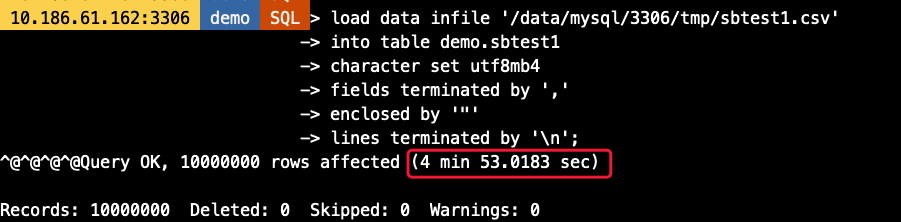

- 使用相同庫(kù)表

- 不對(duì)數(shù)據(jù)做特殊處理,原樣導(dǎo)入

- 不修改參數(shù)默認(rèn)值,只指定必備參數(shù)

|

|

-- Load Data語(yǔ)句 |

|

|

load data infile '/data/mysql/3306/tmp/sbtest1.csv' |

|

|

into table demo.sbtest1 |

|

|

character set utf8mb4 |

|

|

fields terminated by ',' |

|

|

enclosed by '"' |

|

|

lines terminated by '\n' |

|

|

|

|

|

-- import_table語(yǔ)句 |

|

|

util.import_table( |

|

|

[ |

|

|

, |

|

|

], |

|

|

{ |

|

|

"schema": "demo", |

|

|

"table": "sbtest1", |

|

|

"dialect": "csv-unix", |

|

|

"skipRows": 0, |

|

|

"showProgress": True, |

|

|

"characterSet": "utf8mb4" |

|

|

}) |

5. 技術(shù)總結(jié)可以看到,Load Data耗時(shí)約5分鐘,而import_table則只要不到一半的時(shí)間即可完成數(shù)據(jù)導(dǎo)入,效率高一倍以上(虛擬機(jī)環(huán)境磁盤(pán)IO能力有限情況下)

- import_table包含了Load Data幾乎所有的功能

- import_table導(dǎo)入的效率比Load Data更高

- import_table支持對(duì)導(dǎo)入速度,并發(fā)以及每次導(dǎo)入的數(shù)據(jù)大小做精細(xì)控制

-

import_table的導(dǎo)入進(jìn)度報(bào)告更加詳細(xì),便于排錯(cuò)及時(shí)間評(píng)估,包括

- 導(dǎo)入速度

- 導(dǎo)入總耗時(shí)

- 每批次導(dǎo)入的數(shù)據(jù)量,是否存在Warning等等

- 導(dǎo)入最終的匯總報(bào)告

到此這篇關(guān)于MySQL import_table數(shù)據(jù)導(dǎo)入的實(shí)現(xiàn)的文章就介紹到這了,更多相關(guān)MySQL import_table數(shù)據(jù)導(dǎo)入內(nèi)容請(qǐng)搜索服務(wù)器之家以前的文章或繼續(xù)瀏覽下面的相關(guān)文章希望大家以后多多支持服務(wù)器之家!

原文鏈接:https://www.cnblogs.com/zhenxing/p/15102252.html